Dear experts @staff, I am creating a new post to better describe a new question that came up after my other post, “Human model - Gazebo”.

First, I created a scenario in which Jibo recognizes faces, and I assigned three cameras to it. The cameras are working properly and capture the scene correctly.

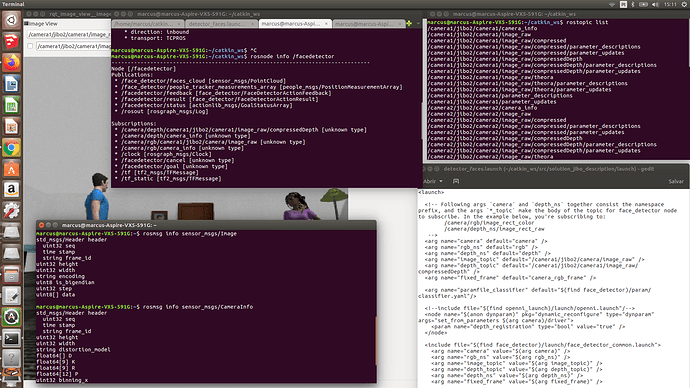

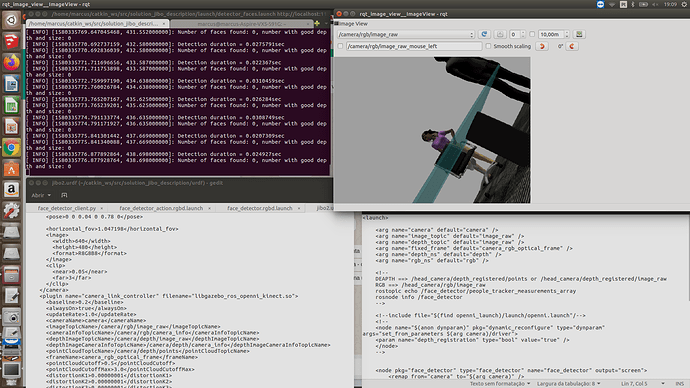

I am using the face_detector_rgb.launch file in continuous mode (not through the action interface), taken from: https://github.com/wg-perception/people/blob/9d99dbd8d9fa4124f270742eac2a5f12e829eeda/face_detector/launch/face_detector.rgbd.launch

Following the answer at https://answers.ros.org/question/210751/face_detector-not-detecting-with-rgbd-camera/?, I tried adjusting the camera image and camera info topic names in the same way. However, it did not work for me (maybe because I am using a different robot or a different camera plugin; I don’t know).

I am unsure in which file I should change the args or values: in my face_detector_rgb.launch, as in the linked answer, or in my URDF camera plugin. I think the launch file subscribes to the wrong topics, so the detector never receives the images. I am attaching screenshots that show what I tried, so you can see it and help me find a solution, because I could not find the mistake.

Note: I have since fixed the “error” above, where I forgot to write camera1 in the image_topic default, but the results did not change.
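One way to find out which topic is wrong, before editing either file, is to ask the running node what it subscribes to. `rosnode info /face_detector` (the node name is assumed from the launch file) lists the node's subscriptions, and as far as I know a subscribed topic that nobody publishes shows up as `[unknown type]`. The minimal sketch below picks those topics out of the command's text output. It assumes the usual `Subscriptions:` header followed by ` * /topic [type]` lines; the sample topic names are hypothetical.

```python
import re
from typing import List

# Matches lines such as "* /camera/rgb/image_rect_color [unknown type]" (after strip()).
_TOPIC_LINE = re.compile(r"^\*\s+(\S+)\s+\[(.+)\]$")


def unpublished_subscriptions(rosnode_info_output: str) -> List[str]:
    """Return subscribed topics that `rosnode info` reports as '[unknown type]'.

    Assumption: a 'Subscriptions:' header is followed by ' * /topic [type]'
    lines, and the next section header (e.g. 'Services:') ends the list.
    """
    missing: List[str] = []
    in_subscriptions = False
    for raw_line in rosnode_info_output.splitlines():
        line = raw_line.strip()
        if line.startswith("Subscriptions:"):
            in_subscriptions = True
            continue
        if not in_subscriptions:
            continue
        if line.endswith(":") and not line.startswith("*"):
            break  # reached the next section, e.g. "Services:"
        match = _TOPIC_LINE.match(line)
        if match and match.group(2) == "unknown type":
            missing.append(match.group(1))
    return missing
```

For example, save the output with `rosnode info /face_detector > info.txt` and pass the file's contents to the function. Any topic it returns is one the detector waits on but the camera plugin does not publish, so that topic name is the one to fix (in the launch remaps or in the plugin).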

More info:

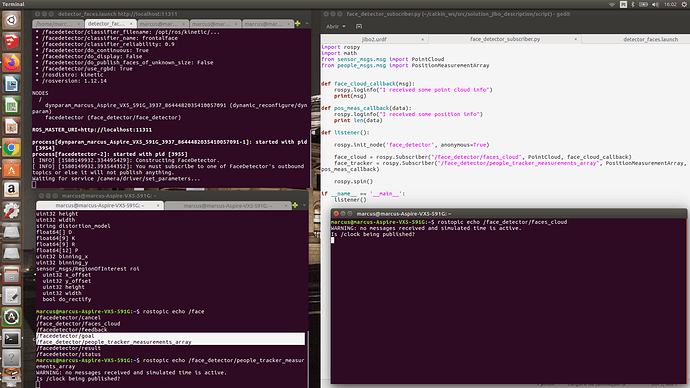

My subscriber

What seems strange to me, and differs from the linked answer, is that the face detector topics are named differently: goal, feedback, and status use “facedetector”, while people_tracker_array_measurements and faces_cloud use “face_detector”. Could this be a mistake in the code I took from GitHub?

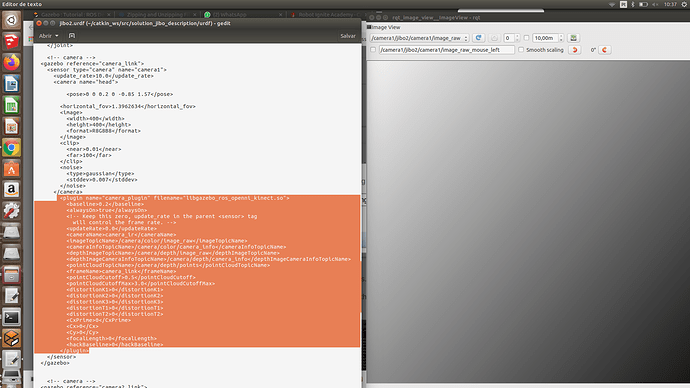

I have read https://wiki.ros.org/rviz/DisplayTypes/Camera, which says that I need both a camera info topic and an image topic for the images to be subscribed properly. According to my URDF model, which you can see below, my plugin has both an info topic and an image topic.

So what is the error? What should I correct and try?
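As far as I know, having both topics is not enough on its own. RViz's Camera display (like image_transport's camera subscriber) looks for `camera_info` as a *sibling* of the image topic, that is, in the image topic's parent namespace. The minimal sketch below mirrors that naming rule as I understand `image_transport::getCameraInfoTopic`; the topic names used are hypothetical.

```python
def camera_info_topic(image_topic: str) -> str:
    """Return the camera_info topic expected next to an image topic.

    Sketch of the sibling rule: replace the last name component with 'camera_info'.
    """
    name = image_topic.rstrip("/")
    if "/" not in name:
        return "camera_info"  # relative name with no namespace
    parent = name.rsplit("/", 1)[0]
    # For "/image_raw" the parent is "", which yields "/camera_info".
    return parent + "/camera_info"
```

So if the plugin publishes `/camera1/image_raw`, the matching info topic should be `/camera1/camera_info`. An info topic published anywhere else (for example under a different camera name) would not be paired with the image.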

OK, let's take it step by step:

- The first thing I see is that you are running it locally. I would encourage you to create a ROSject in ROS Development Studio and share the ROSject link here. That way we can debug the project more easily, because we work on common ground.

- Have you checked the face detection and face recognition chapters of the ROS Perception in 5 Days course here in Robot Ignite Academy?

Try that first and let us know, because complex projects are very difficult to debug unless you reduce the error to the simplest possible scenario (one camera, RGB, a working example…).


Hi @duckfrost, thanks for the feedback!

Yes, I preferred to use my local system because I was facing compatibility issues in ROS Development Studio, and the scenario I developed hit the memory and CPU limits very quickly. Is there another way of sharing my code that would suit you, for example GitHub or email? If not, I will try to solve the package and dependency incompatibilities in ROS Development Studio and reduce the complexity of my scenario…

Thanks for the advice about the ROS Perception course; I intend to try it in the future. However, this project belongs to the ROS for Beginners - URDF course, so I did not know it was necessary to take other modules to complete it.

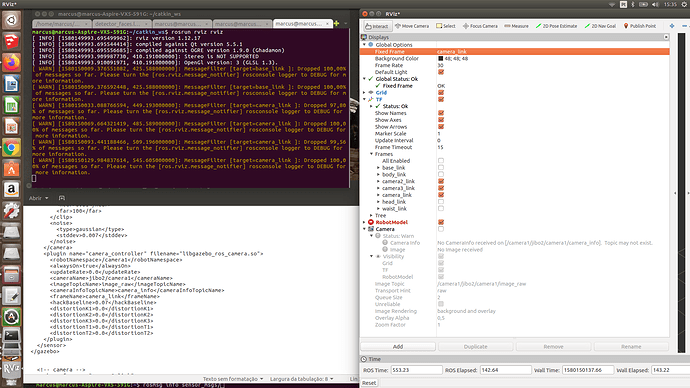

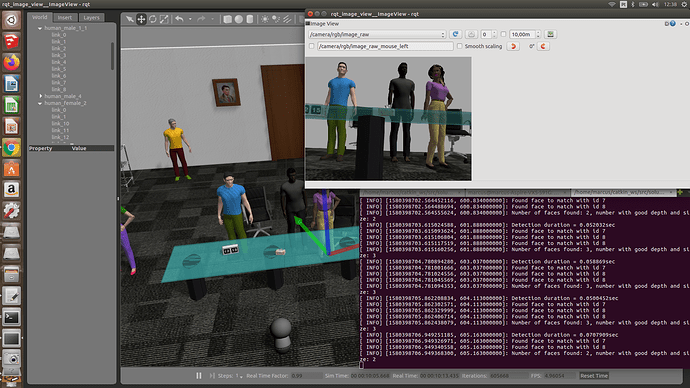

Lastly, a friend told me that the problem might be that I was using a camera plugin without the “depth” feature, so I tried replacing it with the Kinect plugin from: http://gazebosim.org/tutorials/?tut=ros_depth_camera

However, this did not work either, as can be seen below:

I am reading a lot about Gazebo plugins and ROS topic communication, but fixing this issue is not easy. So, which unit of ROS Perception exactly would give me the background to help with this specific problem?
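A frequent source of this kind of mismatch is how a plugin's topic parameters turn into full topic names. The minimal sketch below rests on an assumption I have not verified for every plugin version: the gazebo_ros camera and Kinect plugins advertise relative names (such as `image_raw`) under `<robotNamespace>/<cameraName>/`, and they use absolute names (starting with `/`) unchanged. The namespace and camera names in it are hypothetical, so please confirm against `rostopic list`.

```python
def resolve_plugin_topic(topic: str, camera_name: str, robot_namespace: str = "") -> str:
    """Return the full topic name a camera plugin parameter would map to (assumed rule)."""
    if topic.startswith("/"):
        return topic  # absolute names are not prefixed with any namespace
    parts = [p.strip("/") for p in (robot_namespace, camera_name, topic)]
    return "/" + "/".join(p for p in parts if p)
```

Comparing these resolved names with the topics the detector subscribes to shows directly whether the launch remaps or the plugin parameters need to change.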

Thanks.

Hi,

In the Perception course you have a simulation (Fetch robot) that uses face detection software, and in the next unit (Unit 5, I believe) you have face recognition using deep learning in the background. Face recognition only needs an RGB camera, nothing more. However, if you want spatial recognition (getting the position of the detection), then you also need a depth sensor and the face detection.
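The next sketch makes the point about depth concrete. A 2D detector only gives a pixel box. To turn that box's centre into a 3D position, you need the depth at that pixel plus the camera intrinsics, which come from `camera_info` (whose `K` field is a row-major 3x3 matrix). This is a minimal pinhole back-projection sketch (standard geometry, not face_detector's actual code), and the intrinsic values in the test are hypothetical.

```python
from typing import Sequence, Tuple


def intrinsics_from_k(k: Sequence[float]) -> Tuple[float, float, float, float]:
    """Extract (fx, fy, cx, cy) from a row-major 3x3 camera matrix such as CameraInfo K."""
    if len(k) != 9:
        raise ValueError("expected 9 elements (row-major 3x3)")
    return k[0], k[4], k[2], k[5]


def pixel_to_camera_point(u: float, v: float, depth_m: float,
                          fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float, float]:
    """Pinhole back-projection of pixel (u, v) with metric depth.

    Returns (x, y, z) in the camera optical frame: x right, y down, z forward.
    """
    if not depth_m > 0:  # also rejects NaN, which depth images use for "no reading"
        raise ValueError("depth must be a positive number")
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return x, y, depth_m
```

Without `depth_m` there is no way to scale the pixel offset into metres, which is why RGB alone is enough to recognise a face but not to locate it.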

In this URDF exercise, those project requirements are there just to encourage you to do exactly what you are doing. As you can see, creating a project on your own, without any premade code, is a big challenge.

Try the face recognition part of the Perception course and you will see that you can tackle this project a bit better.


OK, thank you very much for pointing me in the right direction!

I did not know that just an RGB camera was enough!

Just one last question. I am doing the “extra exercises” from each chapter to prepare better for the final exam…

But do you think the basics from each course are enough to start the exam? Maybe I should try the exam without going deeper into these extra exercises. What advice can you give me on this? I am worried about not completing the exam if, for example, something like a “face detector” or those complex extra moves for the Gurdy robot are required…

Thanks in advance!

Well, I am happy: after 15 hours at the computer today, I finally managed to connect the URDF, the camera plugin, and the face_detector node…

Thanks for the hint above, @duckfrost… tonight I can sleep in peace with ROS, hahahaha

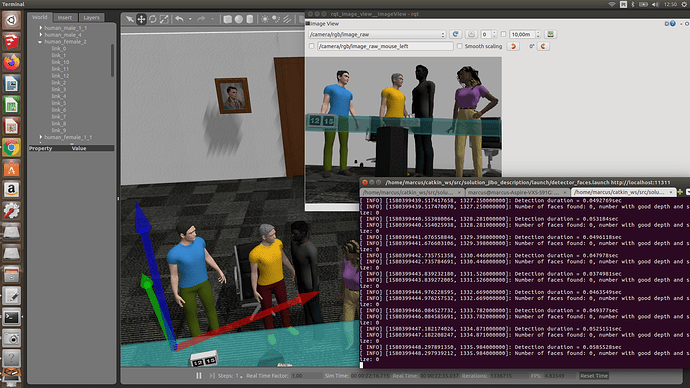

I think now it is just a matter of adjusting the camera pose so that it captures the faces properly, isn’t it? (This plugin’s pose no longer matches the previous plugin’s pose, maybe because it is oriented differently… I don’t know.) Can’t the face_detector node identify faces when the camera is upside down?
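Regarding the upside-down camera: as far as I know, the detector's cascade is trained on upright faces, so fixing the pose in the URDF is the right direction. The minimal sketch below checks a camera link's `<origin rpy="...">` before launching. It makes two assumptions: the Gazebo convention that a camera looks along its link's +X axis with +Z as image "up", and a parent frame that is roughly level (Z up). If the parent link is itself rotated, you would have to compose its rotation first.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def rpy_to_matrix(roll: float, pitch: float, yaw: float) -> List[List[float]]:
    """URDF rpy: fixed-axis rotations about X, then Y, then Z, i.e. R = Rz(yaw) Ry(pitch) Rx(roll)."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def camera_axes(roll: float, pitch: float, yaw: float) -> Tuple[Vec3, Vec3]:
    """Return (viewing direction, image-up direction) in the parent frame."""
    r = rpy_to_matrix(roll, pitch, yaw)
    forward = (r[0][0], r[1][0], r[2][0])  # link +X: where the camera looks (assumed convention)
    up = (r[0][2], r[1][2], r[2][2])  # link +Z: top of the image (assumed convention)
    return forward, up


def is_upside_down(roll: float, pitch: float, yaw: float) -> bool:
    """True if the image's 'up' axis points below the horizon of a level parent frame."""
    _, up = camera_axes(roll, pitch, yaw)
    return up[2] < 0
```

For example, `rpy="3.14159 0 0"` flips the image, so `is_upside_down` returns True for it. A roll of 0 with only pitch and yaw changes keeps the image upright.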

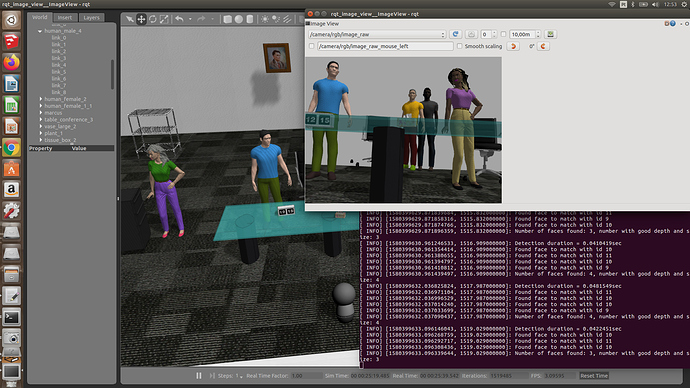

Hmm… I think there is a limitation on depth and/or on recognizing a human’s face from the side (profile). The robot only detected the humans when their faces were turned toward the camera or nearly so. What parameters should I modify to detect faces from the side? (I am imagining a situation where a pedestrian is using a crosswalk near a traffic light.)

Identified: the limitation is on side (profile) faces.

There is no limitation on depth.
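On the side-face limitation: as far as I know, face_detector relies on an OpenCV cascade trained on frontal faces. That would explain why profiles are missed regardless of depth. OpenCV also ships a profile cascade (`haarcascade_profileface.xml`). To my understanding it is trained on profiles turned one way, so a common trick is to run it on both the image and its mirror image. The minimal sketch below shows that trick, with the detector passed in as a function. That function is hypothetical here, and I have not checked whether face_detector lets you swap its cascade through a parameter.

```python
from typing import Callable, List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x, y, w, h) in pixels


def mirror_box(box: Box, image_width: int) -> Box:
    """Map a box found in a horizontally flipped image back to the original image."""
    x, y, w, h = box
    return (image_width - x - w, y, w, h)


def flip_rows(image):
    """Horizontal flip for an image stored as a list of pixel rows."""
    return [row[::-1] for row in image]


def detect_both_profiles(image, detect: Callable[[object], Sequence[Box]],
                         flip: Callable[[object], object] = flip_rows) -> List[Box]:
    """Run a one-sided (profile) detector on the image and on its mirror image."""
    width = len(image[0])
    boxes: List[Box] = [tuple(b) for b in detect(image)]
    # Detections in the mirrored image are mapped back to original coordinates.
    boxes += [mirror_box(tuple(b), width) for b in detect(flip(image))]
    return boxes
```

With OpenCV, one might create `cascade = cv2.CascadeClassifier(cv2.data.haarcascades + "haarcascade_profileface.xml")` and then pass `detect=lambda img: [tuple(b) for b in cascade.detectMultiScale(img)]` and `flip=lambda img: cv2.flip(img, 1)`. Treat that wiring as untested.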